
The company approaches this segment with a multi-layer model.

There may be no better example of the platform approach than how Nvidia has enabled enterprise AI. Like all its systems, this is built as a reference architecture with production systems available from a wide range of system providers, including Atos, Cisco, Dell, HPE, Lenovo and other partners. This is a turnkey system with all the software and hardware required for near- plug-and-play AI tasks. While this might seem like a crazy amount of performance, recommender systems, natural-language processing and other AI use cases are ingesting massive amounts of data, and these data sets are only getting larger all the time.Ĭontinuing with the platform theme, Nvidia also announced new DGX H100-based systems, SuperPODs (multi-node systems) and a 576-node supercomputer. The capability to go outside the system results in compute performance of 192 Teraflops. The new NVLink switch enables up to 256 GPUs to act as a single chip. Previously, NVLink was used to connect GPUs inside a computing system. On a related note, Huang also announced an expansion of NVLink from an internal interconnect technology to a full external switch.

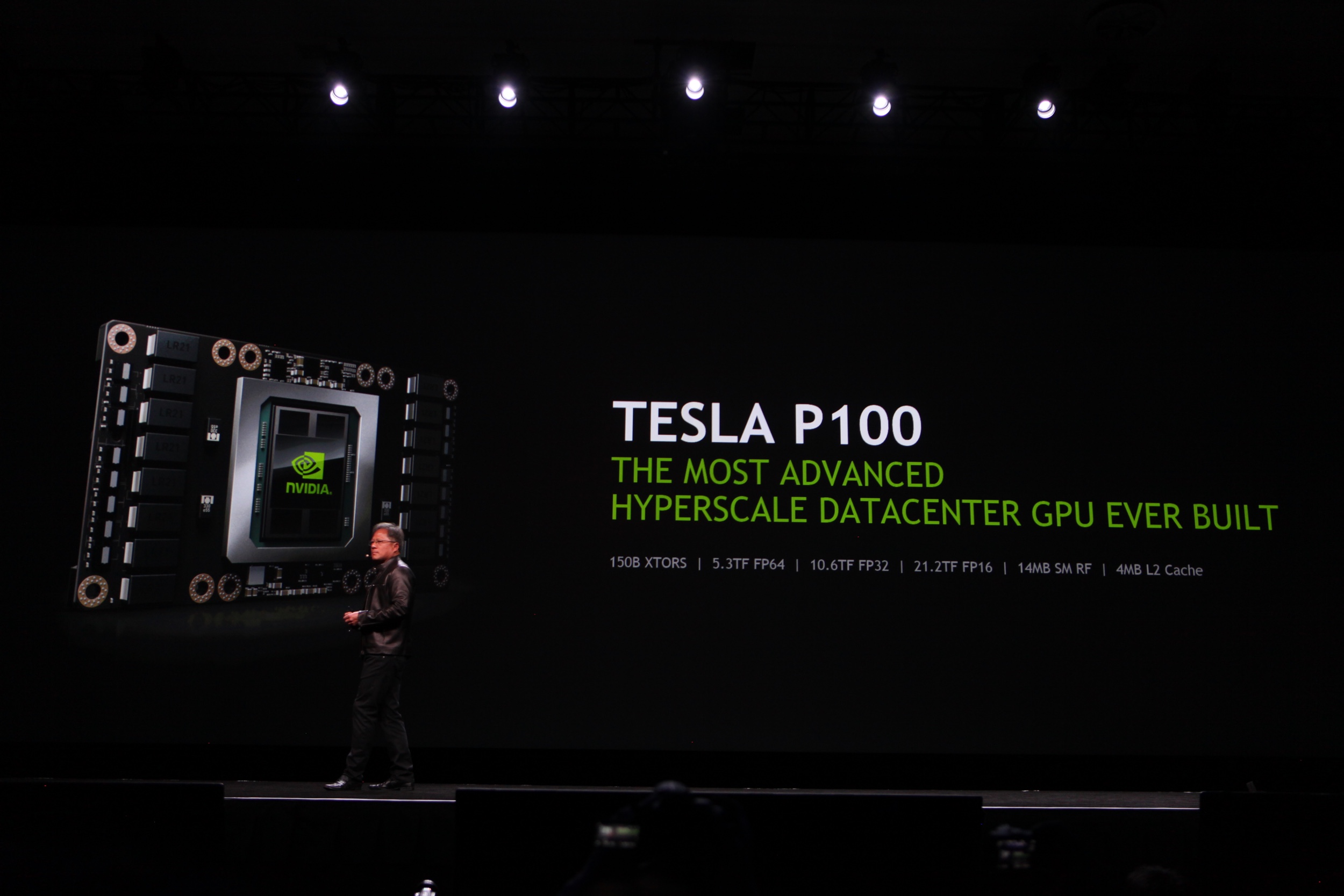

The chip alone provides massive processing capability, but multiple GPUs can be linked together using Nvidia’s NVLink interconnect, effectively creating one big GPU resulting in 4.9 Tbps of external bandwidth. Transformers are used extensively in natural language processing (NLP), since completing a sentence and understanding what the next word in the sentence should be – or what a pronoun would mean – is all about understanding what other words are used and what sentence structure the model might need to learn. Traditional neural networks look at neighboring data, whereas transformers see the entire body of information. For those with only a cursory knowledge of AI, a transformer is a neural network that literally transforms AI based on a concept called “attention.”Īttention is where every element in a piece of data tries to figure out how much it understands or needs to know about other parts of the data. The silicon features a whopping 80B transistors and includes a new engine, specifically designed for training and inferencing of transformer engines.

NVIDIA Hopper H100 Systems ‘transform’ AIĪs noted earlier, the core of all Nvidia solutions is the GPU and at GTC22, the company announced its new Hopper H100 chip, which uses a new architecture designed to be the engine for massively scalable AI infrastructure. This 2022 GTC keynote had many examples of this approach. It takes a platform approach and designs complete, optimized solutions that are packaged as reference architectures for its partners to then build in volume. Unlike all other silicon manufacturers, Nvidia delivers its product as more than just a chip. The company has advanced so far from mere chip design and manufacturing that Huang summarized his company’s Omniverse development platform as “the new engine for the world’s AI infrastructure.”
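For readers who want to see what the "attention" idea from the H100 discussion means in practice, here is a minimal sketch of scaled dot-product self-attention in plain Python (standard library only). It is a textbook simplification, not Nvidia's Transformer Engine or any production implementation: it assumes the input vectors are used directly as queries, keys and values (real transformers apply learned projection matrices first), and the `window` parameter is a hypothetical addition that limits each element to its neighbors, loosely mimicking how a traditional network looks only at nearby data.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats (-inf entries get weight 0)."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(queries, keys, values, window=None):
    """Scaled dot-product attention over a short sequence of vectors.

    Every query is compared with every key, so each output can mix in
    information from the whole sequence. If `window` is given (hypothetical,
    for illustration), element i may only attend to elements j with
    |i - j| <= window, imitating a purely local view of the data.
    Returns (outputs, weights); weights[i][j] is how much element i
    attends to element j, and each row of weights sums to 1.
    """
    d = len(keys[0])
    outputs, weights = [], []
    for i, q in enumerate(queries):
        scores = []
        for j, k in enumerate(keys):
            if window is not None and abs(i - j) > window:
                scores.append(float("-inf"))  # masked out: outside the local window
            else:
                # Dot-product similarity, scaled by sqrt(d) as in the standard formulation.
                scores.append(sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d))
        w = softmax(scores)
        weights.append(w)
        # Output for element i: attention-weighted sum of all value vectors.
        out = [sum(w[j] * values[j][c] for j in range(len(values)))
               for c in range(len(values[0]))]
        outputs.append(out)
    return outputs, weights


if __name__ == "__main__":
    # Toy "sentence" of three 2-d token vectors; assumption: no learned projections.
    x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    _, full = self_attention(x, x, x)
    _, local = self_attention(x, x, x, window=0)
    for row in full:
        print([round(v, 3) for v in row])
    print(local[0])
```

With full attention every token assigns some weight to every other token; with `window=0` each token sees only itself, which is the contrast the article draws between transformers and networks that look only at neighboring data.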
